Their Fears Made Real

Recently, the SJWs at the Verge were whining about advances in facial recognition in an article called “The invention of AI ‘gaydar’ could be the start of something much worse.”

No, I’m not going to link to it.

Why whining?

As you might have guessed, it’s not as straightforward as that. (And to be clear, based on this work alone, AI can’t tell whether someone is gay or straight from a photo.) But the research captures common fears about artificial intelligence: that it will open up new avenues for surveillance and control, and could be particularly harmful for marginalized people. One of the paper’s authors, Dr Michal Kosinski, says his intent is to sound the alarm about the dangers of AI, and warns that facial recognition will soon be able to identify not only someone’s sexual orientation, but their political views, criminality, and even their IQ.

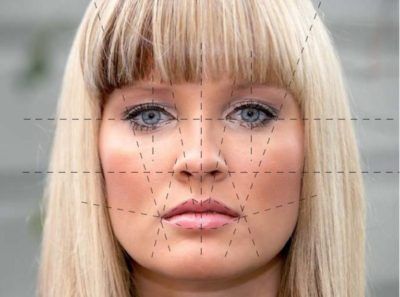

With statements like these, some worry we’re reviving an old belief with a bad history: that you can intuit character from appearance. This pseudoscience, physiognomy, was fuel for the scientific racism of the 19th and 20th centuries, and gave moral cover to some of humanity’s worst impulses: to demonize, condemn, and exterminate fellow humans. Critics of Kosinski’s work accuse him of replacing the calipers of the 19th century with the neural networks of the 21st, while the professor himself says he is horrified by his findings, and happy to be proved wrong. “It’s a controversial and upsetting subject, and it’s also upsetting to us,” he tells The Verge.

OK.

The article then goes on to point out that most of the headlines were misleading (go figure, the malstream media get something right?) and that the study wasn’t about a system that could look at a person walking by, grab a snapshot, and determine if they were gay.

On the face of it, this sounds like “AI can tell if a man is gay or straight 81 percent of the time by looking at his photo.” (Thus the headlines.) But that’s not what the figures mean. The AI wasn’t 81 percent correct when being shown random photos: it was tested on a pair of photos, one of a gay person and one of a straight person, and then asked which individual was more likely to be gay. It guessed right 81 percent of the time for men and 71 percent of the time for women, but the structure of the test means it started with a baseline of 50 percent — that’s what it’d get guessing at random. And although it was significantly better than that, the results aren’t the same as saying it can identify anyone’s sexual orientation 81 percent of the time.
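
For the numerically inclined, here is a rough sketch of why a pairwise score and a "point it at a crowd" score are different animals. Everything in it is my own assumption, not anything from the paper or the article: the classifier scores are modeled as two Gaussians, the separation of 1.24 is picked only so the pairwise figure lands near 81 percent, the 5 percent base rate is a made-up placeholder, and the function names are hypothetical.

```python
import random


def pairwise_accuracy(pos_scores, neg_scores):
    """Fraction of (positive, negative) pairs where the positive one
    gets the higher score. Ties count as half a win (a coin flip)."""
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos_scores
        for n in neg_scores
    )
    return wins / (len(pos_scores) * len(neg_scores))


def precision_at_threshold(pos_scores, neg_scores, base_rate, threshold):
    """Share of flagged individuals who really are positive when the
    classifier is run over a population with the given base rate."""
    tpr = sum(s > threshold for s in pos_scores) / len(pos_scores)
    fpr = sum(s > threshold for s in neg_scores) / len(neg_scores)
    flagged = base_rate * tpr + (1 - base_rate) * fpr
    return base_rate * tpr / flagged if flagged else 0.0


# Assumed toy data: unit-variance Gaussian scores, means 1.24 apart.
rng = random.Random(0)
pos = [rng.gauss(1.24, 1.0) for _ in range(1000)]
neg = [rng.gauss(0.0, 1.0) for _ in range(1000)]

pairwise = pairwise_accuracy(pos, neg)
# Hypothetical 5% base rate, threshold halfway between the two means.
precision = precision_at_threshold(pos, neg, base_rate=0.05, threshold=0.62)

print(f"pairwise accuracy:        {pairwise:.2f}")
print(f"precision in a 5% crowd:  {precision:.2f}")
```

Under these assumptions the pairwise number sits far above the precision you would get flagging people in a mixed crowd, which is the distinction the quoted passage is drawing. It says nothing about whether the underlying statistical signal is real, only about how the headline figure should be read.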

Sure. But why are they so afraid, and why all this effort to downplay it? Later in the article they point out that the researchers used facial structure, “but there’s no proof” that makeup, expression, etc. weren’t factors.

Buried in all of this whitewashing is the fact that, if this study is repeatable, there are statistical differences, subject to “bell curve” distributions, between the average faces of people who are straight and those who are not. And while we are at it: at least some of the differences observed in physiognomy, and the concept that differences in biological makeup exist, matter, and extend beyond just speed, height, and strength (especially insomuch as feminists hate admitting the last couple), may be truer than is politically desirable.

Weren’t gay activists the ones advocating that they were “born this way”? Or are they scared that the supposedly internal-only differences (that are also just a social construct, remember) extend to real biological differences in growth? I think you’ll find a common reply along the lines of “but now people can discriminate against gay people before they even meet them by coming up with some other excuse.”

Because it’s not high school and SJW drama that’s all about backstabbing and passive-aggressive takedowns.

While we are at it – so what if “expression” affected the perceived structure? It’s a common joke that people’s faces and personalities begin to match their dogs’. Even if the facial expressions are “tainting” the results, if the results are accurate, who cares? In engineering, not understanding why something works can sometimes turn around and bite you in the ass, but if you’ve managed to repeatably prove that something does work, and where its limits are, it’s still a useful tool.